Foundry Economics in the AI Age

Why Leading-Edge Economics Have Inverted, and What It Means for the Supply Chain

A fresh perspective is needed on foundry economics at the leading edge. For years, the industry relied on a clear cost-per-transistor framework, even as the economic foundation of Moore’s Law was becoming less durable. That framework held through N3E. It no longer holds at N2 and A16, and the evidence is now strong enough to reframe the investment case. More importantly, the break did not begin only at the newest nodes. The engineering cadence of Moore’s Law continued, but the economic logic behind it began to fade years ago: cost per transistor had already flattened, showing only marginal improvement across recent leading-edge transitions. What our work now shows is that the curve has moved beyond flattening and into outright inversion. This matters because the industry was built not just on scaling density, but on the expectation that each new node would make compute cheaper to manufacture. Once that stopped being true, each new node increasingly had to be justified by the value of the end workload rather than by automatic cost reduction.

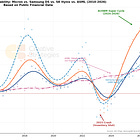

Our proprietary front-end cost model shows that the cost to manufacture a billion transistors on a large monolithic AI die rises from roughly $5.40 at TSMC’s N5 node into the high-single-digit to low-teens range at A16. We view roughly $11 as the midpoint of a plausible range that holds across reasonable variation in wafer pricing, large-die yield, and effective transistor density. The strongest claim is the slope, not the exact level, because the level depends on triangulating a large number of data points: across three node generations, the cost curve has moved decisively upward rather than down. That directional shift marks the inversion of the economic engine that powered five decades of semiconductor progress. For most of that period, each shrink reliably lowered cost per transistor, and the industry built its growth model around that assumption. For the AI-exposed portion of the stack, that assumption now needs to be retired.
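The arithmetic behind a cost-per-billion-transistors figure can be sketched roughly as follows. This is a minimal illustration and not the proprietary model: the gross-die formula is a widely used approximation, the function and parameter names are our own for this example, and every numeric input (wafer price, die area, yield, density) is a hypothetical placeholder chosen only to show how the three sensitivities named above interact.

```python
import math


def gross_dies_per_wafer(die_area_mm2: float, wafer_diameter_mm: float = 300.0) -> int:
    """Common approximation: wafer area over die area, minus an edge-loss term."""
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(wafer_area / die_area_mm2 - edge_loss)


def cost_per_billion_transistors(
    wafer_price_usd: float,
    die_area_mm2: float,
    die_yield: float,
    density_mtr_per_mm2: float,
) -> float:
    """Wafer price spread over the transistors on the good dies it produces."""
    good_dies = gross_dies_per_wafer(die_area_mm2) * die_yield
    # Density is in millions of transistors per mm^2, so divide by 1000 for billions.
    transistors_per_die_bn = die_area_mm2 * density_mtr_per_mm2 / 1000.0
    return wafer_price_usd / (good_dies * transistors_per_die_bn)


# Hypothetical inputs, for illustration only (not figures from the model).
base = dict(wafer_price_usd=20_000, die_area_mm2=800, die_yield=0.5, density_mtr_per_mm2=100)

print(f"base case: ${cost_per_billion_transistors(**base):.2f} per billion transistors")
for label, change in [
    ("wafer price +50%", {"wafer_price_usd": 30_000}),
    ("yield 50% -> 40%", {"die_yield": 0.4}),
    ("density +30%", {"density_mtr_per_mm2": 130}),
]:
    cost = cost_per_billion_transistors(**{**base, **change})
    print(f"{label}: ${cost:.2f} per billion transistors")
```

In this simplified view, a denser node lowers the result only if the wafer price and yield terms do not move against it by more, which is the mechanism behind an upward-sloping curve.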

The inversion starts with the cost of building leading-edge capacity itself. Capital intensity per 50,000 wafers per month of advanced capacity has risen from roughly $16 billion at N5 to an estimated $28 to $30 billion at N2 and A16. Equipment reuse rates, which ran 60 to 70 percent at the last major transition (covered in our WFE report), are collapsing at the newest nodes because the shift to gate-all-around architecture and backside power delivery renders much of the existing installed base obsolete. Yield pressure remains one of the clearest reasons cost is rising at the leading edge. Very large AI chips already lose a meaningful share of output before they ever become finished products. When those chips are then paired with stacked high-bandwidth memory, the economics become even less forgiving because each additional layer creates another opportunity for usable output to fall away. In practical terms, memory dies that look healthy on their own can still produce a much lower effective yield once they are assembled into a full stack, even before packaging losses are included.
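The compounding effect of stacking can be sketched with a simple independence assumption. The function name, the per-bond yield term, and all numeric inputs below are hypothetical, and production flows that test or screen dies before stacking are not modeled here.

```python
def stack_yield(per_die_yield: float, layers: int, per_bond_yield: float = 1.0) -> float:
    """Effective yield of a stack if every die and every bond must be good.

    Assumes failures are independent, which is a simplification.
    """
    if layers < 1:
        raise ValueError("layers must be at least 1")
    # A stack of n dies has n - 1 die-to-die bonds.
    return per_die_yield ** layers * per_bond_yield ** (layers - 1)


# Hypothetical inputs: 90% per-die yield and 99% per-bond yield.
for layers in (4, 8, 12, 16):
    print(f"{layers:>2} layers: {stack_yield(0.90, layers, 0.99):.1%}")
```

Under these assumptions, a per-die yield that looks comfortable in isolation falls quickly as layers are added, before any packaging loss is applied.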

What stands out to us is that this cost inflection is arriving at the same moment AI demand is redefining what advanced compute is worth. In a different era, this kind of cost structure might have forced a much broader pause at the leading edge, or at least a drastic slowdown, because each new node would have been hard to justify. Instead, AI has become the first end market large enough, urgent enough, and economically important enough to absorb it. The procurement framework at the top of the infrastructure stack has evolved from cost per transistor to throughput per watt, tokens per dollar of deployed infrastructure, and revenue capacity per cluster. The relevant question is whether the system generates enough economic return to justify the silicon premium, and for AI training and inference at scale the answer remains clearly affirmative. Visibility into GPU demand extends well into 2027 and 2028, with gross margins holding in the mid-70s percent range, and high-bandwidth memory supply is essentially spoken for into 2028.

The implication is that cost inflation and value inflation are occurring simultaneously. The leading edge is becoming a premium manufacturing tier rather than a universal cost-down path, and the suppliers that benefit most are the ones converting scarce, expensive silicon into systems valuable enough that buyers do not negotiate on the per-transistor premium. In that sense, AI did not restore the old economics of Moore’s Law. It introduced a new economic rationale for scaling after the original one had already weakened. We view that shift as highly significant for the broader semiconductor industry. Without AI, the loss of economic Moore’s Law would likely have imposed a much harder ceiling on how far and how fast the leading edge could continue to advance. Equipment spending reflects this shift. WFE is projected to reach $125 to $145 billion this year, with some estimates above $170 billion, driven less by the number of new fabs and more by the tool intensity required at each successive node.

Where the value accrues, which end markets can absorb the rising cost, how the memory stack reinforces the same thesis, and what signals would change our view are the subjects of the full report. We develop the foundry TAM and WFE market framework separately in our companion reports on the capacity buildout.

What subscribers get in the full report:

Our proprietary front-end cost model with node-by-node economics from N7 through A16, including cross-foundry comparisons for Intel 18A, Intel 14A, and Samsung SF2

The complete memory cost framework: commodity DRAM, NAND, HBM3e, and HBM4 with yield-layer decomposition and wafer trade ratio analysis

A nine-segment value capture matrix identifying where pricing power, margin expansion, and durability sit across the AI semiconductor supply chain

End-market justification table showing which product categories can absorb rising silicon cost and which cannot

WFE structural consequence analysis: why equipment intensity is rising even as the number of qualifying end markets shrinks

Tiered stock positioning: best expressions, debated names, and where the thesis is crowded or conditional

Five concrete proof points to watch over the next two to three quarters

Full model methodology appendix