Microsoft’s AI Capex Is Buying the Enterprise Agent Stack

How compute allocation turns AI infrastructure spend into enterprise software ARPU

After completing AI growth thesis reports on Amazon and Google, both of which held up well against the two companies’ earnings reports yesterday, we now turn to Microsoft.

Microsoft’s AI debate has been too centered on the visible parts of the model: Azure capacity, GPU availability, capex, and cloud gross margin. Those are the right places to start, but they do not fully explain the growth thesis. The issue we keep coming back to is allocation. Microsoft does not have unlimited deployable AI capacity, and every GPU pushed toward one workload has an opportunity cost somewhere else. Some capacity is sold externally through Azure. Some supports OpenAI-related demand. Some is consumed internally by Microsoft 365 Copilot, GitHub, Fabric, Foundry, Dynamics, Security, and the agent-governance layer now forming across the enterprise stack. That allocation decision is where the earnings power debate sits.

Q3 gave more support to the view that Microsoft’s AI numerator is expanding. AI annualized revenue run rate surpassed $37 billion, Azure grew 40%, and Microsoft 365 Copilot crossed 20 million paid seats. We would not treat those as separate proof points. They point to the same mechanism: Microsoft is turning AI capacity into infrastructure consumption, software seats, developer usage, data-platform pull-through, and agent tooling. This is why Azure-only return math is the wrong singular focus. It captures the most visible revenue stream, while missing the monetization that shows up across the software estate.

We understand why high fixed costs draw so much attention in this cycle. Capex remains elevated, Q4 spend is guided higher, and calendar-year 2026 capex is expected to reach roughly $190 billion, including about $25 billion tied to component inflation rather than incremental capacity. Microsoft Cloud gross margin compressed to 66%, with Q4 guided to roughly 64%. We recognize the margin pressure, but the key question is whether Microsoft’s software monetization scales quickly enough to offset the denominator the market can already see. If Microsoft can convert scarce compute into Microsoft 365 ARPU, GitHub usage, Fabric consumption, Security attach, and agent-governance revenue, this becomes a broader enterprise software monetization cycle rather than a pure Azure capacity build.
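The capex and margin figures above reduce to simple arithmetic, sketched below as a rough illustration. The only inputs are the rounded numbers quoted in the paragraph. The function name `capex_breakdown` and the "ex-inflation" label are ours, and treating the remainder as a single ex-inflation bucket is a simplifying assumption, not a company disclosure.

```python
# Rounded figures quoted in the text (USD billions, calendar-year 2026 capex).
TOTAL_CAPEX_BN = 190.0
COMPONENT_INFLATION_BN = 25.0

# Microsoft Cloud gross margin: reported quarter vs. Q4 guide, in percent.
REPORTED_MARGIN_PCT = 66.0
GUIDED_MARGIN_PCT = 64.0


def capex_breakdown(total_bn: float, inflation_bn: float) -> dict:
    """Split capex into a price-driven part and an ex-inflation remainder.

    Assumption: everything that is not component inflation is treated as
    one ex-inflation bucket; the source does not break it down further.
    """
    if total_bn <= 0:
        raise ValueError("total capex must be positive")
    return {
        "ex_inflation_bn": total_bn - inflation_bn,
        "inflation_share": inflation_bn / total_bn,
    }


breakdown = capex_breakdown(TOTAL_CAPEX_BN, COMPONENT_INFLATION_BN)
margin_step_pts = GUIDED_MARGIN_PCT - REPORTED_MARGIN_PCT

print(f"Ex-inflation capex: ~${breakdown['ex_inflation_bn']:.0f}B")
print(f"Inflation share of capex: {breakdown['inflation_share']:.1%}")
print(f"Guided gross margin change: {margin_step_pts:+.0f} pts")
```

On these inputs, roughly one dollar in eight of the guided spend reflects higher component prices rather than added capacity, which matters when comparing capex growth with capacity growth.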

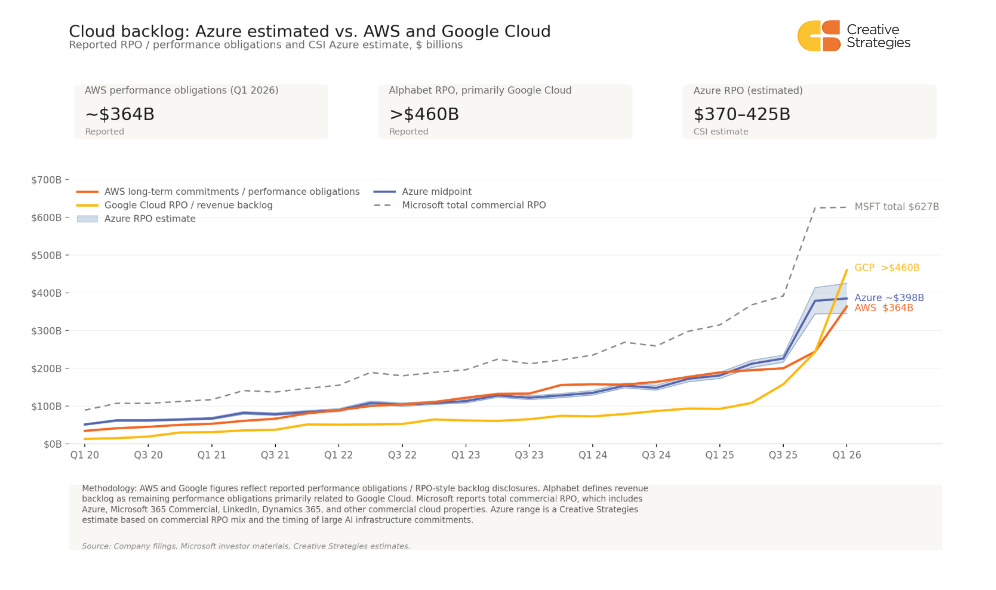

The broader cloud RPO and backlog data help explain why the debate over whether the AI infrastructure cycle is durable remains flawed. Across AWS, Google Cloud, and Microsoft, the forward revenue base tied to cloud and AI infrastructure has moved from steady compounding into a steeper AI-era slope. The numbers are not perfectly comparable: AWS discloses long-term performance obligations primarily related to AWS, Alphabet reports remaining performance obligations, or “revenue backlog,” primarily related to Google Cloud, and Microsoft reports total commercial RPO rather than Azure-specific backlog. Even with those caveats, the direction is useful. AI demand is now showing up in contracted revenue visibility across the major cloud platforms, adding another layer of evidence beyond management commentary, quarterly growth rates, and capacity announcements.

The backlog inflection provides the industry evidence behind the durability debate. AWS and Google confirm that AI infrastructure demand is broadening across hyperscale cloud, but Microsoft’s growth thesis turns on a familiar question: whether scarce compute allocated internally can convert into software ARPU, usage meters, Fabric pull-through, security attach, and agent-governance control points. That is why the Microsoft debate cannot stop at backlog, Azure growth, or capacity additions.

First-party allocation remains central to our thesis. Our working model assumes roughly 30% of newly deployed GPU capacity is going to first-party workloads and roughly 70% to external Azure customers, triangulated from channel work and consistent with Q3 commentary. Internal capacity allocated to Microsoft 365 Copilot, GitHub Copilot, Foundry, and Dynamics AI does not appear as third-party Azure revenue. It monetizes through seat ARPU, data pull-through, workflow attach, and governance. The cost is visible in cloud gross margin today, while the software return is still scaling across Microsoft 365 Commercial Cloud and the broader enterprise estate.
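A minimal sketch of why Azure-only return math can understate the numerator under this allocation view. The 30/70 split comes from the working model described above. Everything else is a hypothetical placeholder: the names `split_capacity` and `return_view` are ours, and the cost and revenue inputs are index values chosen only to show the mechanics, not estimates of Microsoft’s economics.

```python
from dataclasses import dataclass

# Working-model split from the text (share of newly deployed GPU capacity).
FIRST_PARTY_SHARE = 0.30
EXTERNAL_AZURE_SHARE = 0.70


@dataclass
class ReturnView:
    azure_only: float   # external Azure revenue / total capacity cost
    full_estate: float  # (Azure + first-party software revenue) / total cost

    @property
    def understatement(self) -> float:
        """Return missed when only external Azure revenue is counted."""
        return self.full_estate - self.azure_only


def split_capacity(new_capacity: float) -> dict:
    """Allocate new capacity between first-party and external workloads."""
    return {
        "first_party": new_capacity * FIRST_PARTY_SHARE,
        "external_azure": new_capacity * EXTERNAL_AZURE_SHARE,
    }


def return_view(capacity_cost: float, azure_revenue: float,
                first_party_revenue: float) -> ReturnView:
    """Compare revenue per unit of cost with and without first-party revenue."""
    if capacity_cost <= 0:
        raise ValueError("capacity_cost must be positive")
    return ReturnView(
        azure_only=azure_revenue / capacity_cost,
        full_estate=(azure_revenue + first_party_revenue) / capacity_cost,
    )


# HYPOTHETICAL index values for illustration only, not estimates.
allocation = split_capacity(100.0)
view = return_view(capacity_cost=100.0, azure_revenue=70.0,
                   first_party_revenue=45.0)

print(allocation)
print(f"Azure-only return:  {view.azure_only:.2f}")
print(f"Full-estate return: {view.full_estate:.2f}")
print(f"Understatement:     {view.understatement:.2f}")
```

The design point is that the full cost of the capacity sits in the denominator either way, so any first-party revenue left out of the numerator lowers the measured return one for one.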

Customer evidence is moving in Microsoft’s direction, but adoption is still early. CIO and channel work places AI at roughly 8–10% of IT budgets, with most large organizations now carrying a dedicated AI budget line. Funding is coming from both reallocation and incremental budget, and the reallocation pressure appears heavier on IT services and systems integrators than on cloud infrastructure or cybersecurity. That mix favors Microsoft’s protected cloud and security layers and its packaged AI software model. The constraint is production maturity, with channel checks still showing GenAI rollout in the single digits and most customers in pilot phases.

We understand the risks that still exist. Microsoft Cloud gross margin is under pressure, component inflation is now a material capex variable, and bookings will remain noisy as the OpenAI comparison rolls through the model. The revised OpenAI partnership improves Microsoft’s economics on OpenAI-derived workloads through retained equity, revenue-share payments through 2030 subject to a cap, continued non-exclusive IP rights through 2032, and the removal of Microsoft’s revenue-share obligation to OpenAI. The offset is that OpenAI now has more freedom to serve products across other clouds.

We would evaluate Microsoft’s AI capex as a portfolio monetization cycle, not only as an Azure capacity cycle. Azure is the infrastructure base, but the return path runs through Microsoft 365 Copilot, E5, the announced E7 frontier suite, Fabric, GitHub, Dynamics, Security, and the Agent 365 / Copilot Studio governance layer. The infrastructure cost is already visible in the income statement, while software and governance ARPU remain earlier in their curve. The next four quarters should show whether Microsoft’s user-plus-usage transition accelerates faster than depreciation pressure weighs on cloud gross margin. Microsoft’s AI capex is buying the foundation for a broader enterprise software pricing and control layer.

What subscribers get in the full note

Full multi-vector AI ROIC framework, including why Azure-only return math understates the numerator after the Q3 print.

Updated earnings analysis covering AI ARR, Azure growth, capex composition, capacity, and the OpenAI restructure.

First-party versus third-party GPU allocation, refreshed with the Q3 allocation language.

Customer-side validation triangulated across CIO, partner, and channel work on AI budget formation, vendor positioning, and deployment maturity.

Azure growth, capex, margin, and capacity model, including component-price disclosure and Q4 trajectory.

Copilot, E5, and the E7 frontier suite as a layered ARPU model.

Fabric and the data pull-through layer underneath enterprise agents.

Agent 365 and Copilot Studio as the enterprise agent governance and control plane.

OpenAI concentration, the new revenue-share structure, multi-cloud risk, and Anthropic integration.

Competitive framework versus AWS and Google, scenario framework, and a metric monitoring dashboard for the next four quarters.