Google’s AI Capex Is Being Measured Against the Wrong Revenue Line


We attended Google Cloud Next this week, sat through the key sessions, and spent time with management, partners, customers, and the broader ecosystem. What follows is our framework for evaluating Google’s business as a whole through the lens of its current AI capital cycle.

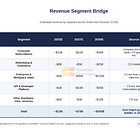

The market’s debate about Google has settled on a question that captures the visible part of the investment cycle, but not the full return structure underneath it. Can the company earn an acceptable return on roughly $180 billion of 2026 capex if that spend is measured against cloud AI revenue alone? We think that framing leaves out too much of where the infrastructure is already monetizing.

More fundamentally, it misses the structure of the business Google has been building.

The same infrastructure supporting Gemini inside GCP also powers Search, lifts ad yield, expands Workspace, and increasingly sits underneath agentic software and orchestration layers that do not show up neatly in a single “AI revenue” bucket. From there, the more useful question becomes whether Google’s AI stack is generating returns across enough monetization surfaces at once to make the capital cycle more rational than the market currently assumes.
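
To make the attribution point concrete, here is a minimal Python sketch of the arithmetic. It is an illustration of the framing, not our model. It divides attributed incremental operating profit by capex, a crude single-year, pre-tax proxy that ignores depreciation schedules and asset life. Only the roughly $180 billion capex figure comes from the text. The `Surface` type, `return_on_capex` helper, profit figures, and attribution shares are all hypothetical placeholders chosen to show the mechanics, not estimates.

```python
from dataclasses import dataclass


@dataclass
class Surface:
    """One monetization surface served by the shared AI infrastructure (hypothetical)."""
    name: str
    incremental_operating_profit: float  # annual, $bn; illustrative placeholder only
    attribution_share: float  # assumed fraction credited to the AI stack, 0..1


def return_on_capex(surfaces: list[Surface], capex: float) -> float:
    """Attributed incremental operating profit divided by capex.

    A simple gross proxy, not a true ROIC: no taxes, depreciation, or multi-year asset life.
    """
    if capex <= 0:
        raise ValueError("capex must be positive")
    attributed = sum(s.incremental_operating_profit * s.attribution_share for s in surfaces)
    return attributed / capex


CAPEX_2026 = 180.0  # $bn, the approximate figure cited in the text

# Placeholder inputs chosen only to show the mechanics; they are NOT estimates.
surfaces = [
    Surface("Cloud AI", 10.0, 1.0),
    Surface("Search ad yield", 8.0, 0.5),
    Surface("Workspace", 2.0, 0.5),
]

# Same denominator in both cases; only the set of surfaces in the numerator changes.
cloud_only = return_on_capex([s for s in surfaces if s.name == "Cloud AI"], CAPEX_2026)
multi_surface = return_on_capex(surfaces, CAPEX_2026)
print(f"cloud-only: {cloud_only:.1%}, multi-surface: {multi_surface:.1%}")
```

Running it prints `cloud-only: 5.6%, multi-surface: 8.3%`. The design point is that the capex denominator is shared while the numerator is not. Under this framing, any surface with non-zero attributed profit raises the measured return, so the cloud-only ratio is a floor rather than the full picture. The hard analytical work sits in the attribution shares, which this sketch simply assumes.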

As the evidence has started to broaden beyond management commentary, the debate has become easier to frame. In the fourth quarter of 2025, Google Cloud grew 48% year over year and backlog doubled to $240 billion. Search revenue grew 17%, its fastest rate in roughly four years. Cloud operating margin reached 30.1%. In the first quarter of 2026, agency checks pointed to conversion-rate growth of 13.7%, up from 6.6% a year earlier. We do not cite these datapoints in isolation. Taken together, they are what the flywheel should look like if the same compute base is improving more than one revenue line at once.

The Search debate is also more nuanced than either side is allowing. The bear case has been right about one thing: AI Overviews are reducing click-through rates on informational and educational queries, with third-party work suggesting declines of roughly 20% to 30%. But those were never the core monetization surfaces. The more important point is that the categories where Google actually monetizes (shopping, travel, local services, and other bottom-funnel queries) look much more resilient than the broad disruption narrative implies. Commercial-intent share loss remains unproven, and the more relevant signal so far is improving matching and conversion rather than rising ad density. That makes monetization parity more credible than feared, though still short of fully proven. For a competitive comparison, see our full note on OpenAI and its revenue prospects.

Cloud Next sharpened the infrastructure side of the story. Google launched two eighth-generation TPUs built for different workload classes: TPU 8t aimed at training and TPU 8i aimed at inference. That split gives a clearer read on how Google sees AI economics evolving, and it is consistent with what we have argued for some time: training and inference are distinct infrastructure problems. Inference is increasingly constrained by memory, latency, and coordination overhead, which is why Google emphasized BoardFly networking, HBM expansion, and “breaking the memory wall.” In our view, that is the deeper signal from Cloud Next. Google is positioning for the phase of AI where serving models efficiently across Search, Gemini, and enterprise agents matters as much as training them in the first place.

Our report also develops a point the market is still underweighting. Agentic AI is increasingly bringing CPUs and orchestration closer to the center of the stack. Triangulated estimates suggest that as these workloads scale, CPU attach becomes a more meaningful contributor to cloud revenue and margin over time. Axion is still early, but it no longer makes sense to treat it as immaterial.

The full report is narrower than a complete Alphabet sum-of-the-parts review and more expansive than the cloud-only ROIC debate. It is focused on the market’s most important current question: whether Google’s AI capital cycle is earning acceptable returns once those returns are measured across the surfaces the infrastructure actually powers.

What paid subscribers get in the full note:

• The full multi-vector ROIC framework, including the attribution logic behind why cloud-only return math understates the cycle

• The disaggregated Search durability section, including what is proven, what is still management claim, and where the real risk is concentrated

• A full Cloud Next infrastructure analysis on TPU 8t, TPU 8i, BoardFly, goodput, and what the split roadmap says about Google’s workload assumptions

• The Axion and CPU opportunity, sized as an emerging call option rather than a current run-rate business

• Bull, base, and bear valuation frameworks, with the variables most likely to move the debate over the next four quarters