Google’s TPU Strategy Offers a Clearer View of the Next AI Bottleneck

Why Google’s New TPU Strategy Carries More Strategic Weight Than a Routine Silicon Update

This note is not intended to be a chip-versus-chip architectural comparison, nor is it an attempt to rank Google’s latest TPU designs against competing silicon. That is a different exercise, and in many ways a less useful one at this stage. What we are more interested in is the logic behind Google’s design choices, because those choices offer a clearer view into how the company sees AI workloads evolving and where it believes custom silicon creates strategic leverage. In that sense, the announcement is useful less as a scorecard and more as a window into Google’s infrastructure philosophy. What stands out is a company designing around anticipated workload behavior two to three years ahead, and doing so from the vantage point of a tightly integrated stack that spans models, products, networking, systems, and data centers.

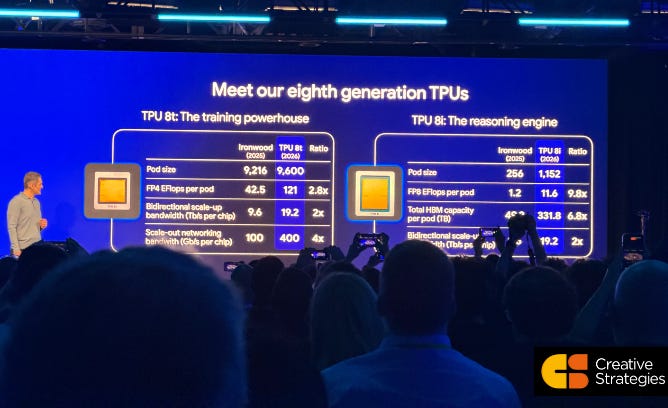

Google’s latest TPU announcement makes more sense when viewed through the underlying infrastructure question it is addressing. The market is still trying to frame AI infrastructure around a single curve of larger models, more compute, and more accelerators. Google is signaling a more developed view of where they believe the stack is headed. Training and inference are evolving into distinct infrastructure problems, each with its own bottlenecks, each with its own economic logic, and increasingly each with its own optimal silicon design. That is the larger takeaway from Google introducing two eighth-generation TPUs at once: TPU 8t for training and TPU 8i for inference. In our view, that design split offers a more useful window into Google’s competitive position than the raw performance figures alone.

What stands out to us is how deliberately Google tied the product story to workload behavior. TPU 8t is framed as the training “powerhouse”. TPU 8i is framed as the reasoning engine. Google is emphasizing that these are separately designed chips built for different use cases rather than variations of the same underlying product. That framing carries strategic significance because it reflects an internal judgment that the most valuable AI workloads are diverging in ways a single generalized design would serve less efficiently. Something we agree with, as we see specialization as a continued trend. As model development continues to scale, training still demands immense bandwidth, synchronization, and tightly coordinated compute. As AI products move into reasoning, agents, and real-time enterprise workflows, inference places increasing pressure on latency, memory capacity, and the network path between chips. Google is building for both trajectories at the same time.

The training side of this announcement extends a path Google has been on for years. TPU 8t preserves Google’s emphasis on giant pods and supercomputer-scale coordination while materially lifting the performance envelope of the system. On the slide shown during the presentation, Google compares Ironwood with TPU 8t and shows FP4 exaflops per pod increasing from 42.5 to 121, bidirectional scale-up bandwidth per chip rising from 9.6 to 19.2 Tb/s, and scale-out networking bandwidth moving from 100 to 400 Gb/s. Pod size also increases from 9,216 chips to 9,600. Those figures point to a familiar but still consequential objective. Google wants larger training domains with fewer communication penalties as model complexity rises. The practical outcome is that more of the theoretical compute becomes usable compute, which supports faster iteration on frontier models and a better chance of sustaining performance leadership at large scale.

The inference narrative was helpful because it reveals more about how Google views the next phase of AI deployment. TPU 8i is presented as a dedicated reasoning engine, and the slide language around it is revealing: “Breaking the Memory Wall,” “Accelerated Agent Processing,” “Boardfly Networking,” and “Cost Efficiency.” The points they are emphasizing tell us Google sees inference as a systems problem shaped by memory pressure, coordination overhead, and the need to keep response times low as model behavior becomes more dynamic. Reasoning and agentic workloads place heavier demands on infrastructure because the work extends beyond generating one response and increasingly involves multiple steps, more memory state, and more system coordination. As that becomes more common, the economics of inference depend increasingly on how efficiently the infrastructure handles those behaviors. And these inference economics will be one of, if not the, single biggest factor in ROIC.

The best example of that design philosophy is the network topology Google discussed for TPU 8i. The team explains that previous connectivity favored throughput, which aligned well with moving large quantities of data. Their newer inference design shifts attention toward minimum latency. Google describes a network topology called Boardfly that reduces the diameter of the network, which shortens the distance between chips. That is a technical choice with a direct product-level benefit. Lower network distance supports faster movement of information between compute nodes. Faster information movement supports lower end-to-end latency. Lower latency improves the usability of search, assistants, enterprise agents, and any workflow where responsiveness shapes user experience. The design decision is therefore easier to understand when mapped to the service layer. Google is tuning silicon and interconnect around the characteristics of reasoning workloads rather than simply scaling a training-oriented architecture into inference. Again, emphasizing optimizing the system, or specialized design for specific AI workloads, remains a strategic initiative.

The pod-level figures for TPU 8i reinforce the same point. Google shows pod size increasing from 256 chips on Ironwood to 1,152 on TPU 8i, FP8 exaflops per pod increasing from 1.2 to 11.6, and total HBM capacity per pod moving from roughly 49 TB to 331.8 TB. Those numbers suggest Google expects advanced inference to require substantially more clustered compute and materially more memory attached to the system. The broader implication is that inference capacity is becoming more tightly linked to memory architecture and interconnect design than many investors still assume. When Google references “breaking the memory wall,” it is pointing toward a familiar bottleneck in a new context. Model serving, especially for reasoning systems, becomes constrained not just by arithmetic throughput but by how much model state can be held and moved efficiently across the machine. More on memory in our report below.

That shift has strategic consequences for Google because many of its most important AI surfaces are increasingly inference-heavy. Search, AI Overviews, AI Mode, Gemini, productivity tools, enterprise assistants, and future agent-driven services all depend on serving models quickly and repeatedly at very large scale. Google says directly in the discussion that the value of AI increasingly comes from serving the model. That line deserves attention because it helps explain why a dedicated inference TPU deserves to be viewed as a core infrastructure investment rather than as a side branch of the roadmap. Training establishes capability. Inference determines how broadly that capability can be delivered, how efficiently it can be monetized, and how reliably it can be embedded across the company’s product portfolio.

The presentation also offered a useful reminder that Google is telling an infrastructure story rather than a chip story. The event begins with energy, data centers, cooling, racks, hardware, networking, software, models, and products. The TPUs sit inside that broader architecture. We think that framing is central to how Google wants investors to view its advantage. The company is not trying to win on component performance in isolation. It is trying to show that vertical integration allows it to shape the entire system around the workloads it sees emerging inside DeepMind, Search, YouTube, Ads, Gemini, and Cloud. That creates a design loop that few companies can replicate. When the organization building the models, the products, and the infrastructure are tightly linked, the hardware roadmap can reflect anticipated workload shifts two or three years ahead. Google says this explicitly when it describes the need to predict where these workloads are going before they become obvious externally.

This is where the announcement translates into competitive advantage. Many firms can purchase AI infrastructure. A smaller group can design some of it. An even smaller group can observe frontier workloads inside their own model teams, tune custom silicon for those workloads, deploy it across large internal products, and then expose that same infrastructure through cloud. Google occupies that narrower category. That creates leverage in several forms. It supports better utilization across internal and external demand. It improves the return on custom design investment because the technology can be amortized across many high-value workloads. It also allows the company to learn from production behavior at a scale that most enterprises and many model labs do not see firsthand. Over time, that feedback loop can compound into better system design and stronger service economics.

Another part of the discussion that deserves more attention is reliability. Google spends time on “goodput,” (we detail that in our NVIDIA report), which it defines as actual forward progress in computation rather than theoretical throughput. At the pod sizes and supercomputer scale Google is describing, the system is coordinating thousands or tens of thousands of chips. In that environment, chip failures are part of operating reality. Google explains that once systems reach that scale, the important engineering challenge involves detecting failures quickly, reconfiguring the system, and avoiding silent data corruption that can spread through the broader workload. That is an important window into the company’s infrastructure philosophy. What Google is ultimately delivering to its own services and to cloud customers is a production-scale system built to keep making forward progress at very large scale. Viewed from a strategic lens, it shapes actual cost, deployment efficiency, and service reliability in live environments.

Google suggests, and we agree, that agentic computing will bring general-purpose CPUs back into a more prominent role while specialization continues to deepen across AI infrastructure. That view fits with the broader direction implied by the TPU launch. The infrastructure stack is becoming more heterogeneous. Training remains specialized. Inference is becoming more specialized. Orchestration and agent execution will elevate the role of CPUs (and increase the ratio of CPU to GPUs per our models) and additional system components over time. The implication is that AI data centers are moving toward a more segmented architecture where the economic value comes from balancing specialized compute, memory, networking, and orchestration around specific workload classes. More on the CPU resurgence in our agentic CPU report.

For investors, the key issue is how to interpret this in competitive terms. Google’s latest TPU generation is best evaluated through the economics and performance of the workloads becoming most valuable across the AI stack, and through how much its integrated infrastructure approach improves both. We think this announcement suggests that it does. The split between TPU 8t and TPU 8i shows a company designing around where demand is moving rather than around a generic view of compute growth. It shows an infrastructure organization translating technical insight into service-level outcomes. It also shows a company that is committed to custom silicon as one part of a broader systems advantage spanning networking, software, reliability, and product integration. That combination gives Google a stronger position in AI than the market often credits, particularly when the debate shifts from who can train frontier models to who can deliver them efficiently across products used by billions of people and enterprises alike. The serving of models at scale, the inference era, is upon us.

The larger takeaway is that Google is shaping AI infrastructure around a more mature understanding of the workload mix ahead. TPU 8t extends its training ambitions. TPU 8i brings more explicit architectural focus to reasoning and agentic inference. Together, they reflect a company designing for the next phase of AI deployment rather than reacting to the last one. For a market still looking at AI silicon through a mostly generalized lens, that is a useful signal about where the next layer of competitive separation may emerge.

Full report tomorrow for subscribers on Google growth thesis.

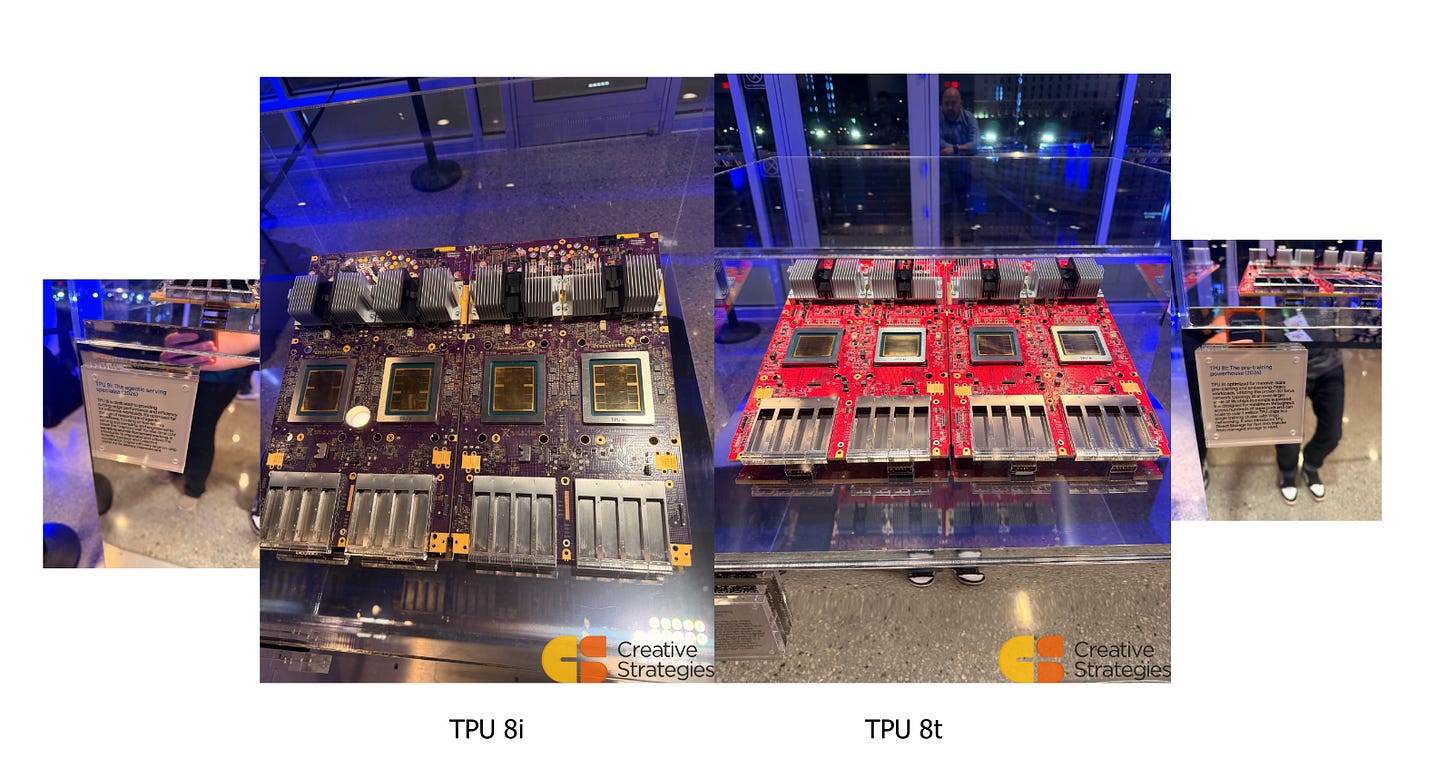

For now, some eye candy.

The Diligence Stack delivers analyst-grade intelligence on the companies reshaping the technology landscape. We analyze the full stack—from silicon to software to business model—to reveal what drives real differentiation. For investors, operators, and technical leaders who need to understand strategy, competitive advantage, and whether the technical foundations beneath them are built to last.

Click below to subscribe.