Liquid Cooling: The Thermal Prerequisite for AI Infrastructure Scale

Prerequisite: "800 VDC: The Inflection Point Reshaping Datacenter Power and AI Infrastructure" (March 2026). This report builds directly on that analysis and assumes familiarity with the power architecture transition it describes.

The Thesis

Our view is that liquid cooling has become a primary deployment gating factor for next-generation AI compute. The GPU power roadmap is the biggest driver of this shift. For two decades, server racks ran at 5 to 20 kilowatts, and air conditioning handled the heat without difficulty. A single GB200 NVL72 rack runs at roughly 120 kilowatts, six to twenty-four times that historical baseline and well past the roughly 40 to 50 kilowatt threshold where air cooling becomes increasingly uneconomic without hybrid or liquid assist. The current GPU generation already operates in territory where liquid is the only viable primary thermal architecture. Vera Rubin pushes further in a fully liquid-cooled, fanless tray design. Our supply-chain checks suggest subsequent platforms push rack power considerably higher still. The gap between GPU thermal requirements and air-cooling capacity widens with every generation.
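The density comparison above is simple division, and a minimal sketch makes the arithmetic easy to rerun as rack power figures change. The only inputs are the numbers quoted in the paragraph (a 5 to 20 kW historical range, a roughly 120 kW GB200 NVL72 rack, and a roughly 40 to 50 kW air-cooling threshold). The function and constant names are illustrative assumptions and do not come from any vendor tool or from the full report.

```python
# Rack power figures quoted in the text, in kilowatts (all approximate).
HISTORICAL_RACK_KW = (5.0, 20.0)        # historical rack range
AIR_COOLING_THRESHOLD_KW = (40.0, 50.0) # where air cooling becomes uneconomic
GB200_NVL72_RACK_KW = 120.0             # single GB200 NVL72 rack


def density_multiple(rack_kw: float, baseline_kw: float) -> float:
    """Return how many times larger rack_kw is than baseline_kw."""
    if baseline_kw <= 0:
        raise ValueError("baseline_kw must be positive")
    return rack_kw / baseline_kw


def multiple_range(rack_kw: float, baseline_range_kw: tuple) -> tuple:
    """Return (smallest, largest) multiple of rack_kw over a baseline range.

    The smallest multiple comes from the high end of the baseline range,
    the largest from the low end.
    """
    low_kw, high_kw = baseline_range_kw
    return (density_multiple(rack_kw, high_kw), density_multiple(rack_kw, low_kw))


if __name__ == "__main__":
    hist_min, hist_max = multiple_range(GB200_NVL72_RACK_KW, HISTORICAL_RACK_KW)
    air_min, air_max = multiple_range(GB200_NVL72_RACK_KW, AIR_COOLING_THRESHOLD_KW)
    print(f"vs. historical racks: {hist_min:.1f}x to {hist_max:.1f}x")
    print(f"vs. air-cooling threshold: {air_min:.1f}x to {air_max:.1f}x")
```

Swapping in a different rack power figure for `GB200_NVL72_RACK_KW` shows how quickly the multiple over the air-cooling threshold grows as rack power rises.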

Most of the market is still treating liquid cooling as a component upgrade, and we think that framing understates the actual constraint. Liquid cooling is the thermal gating factor on how much next-generation compute gets deployed. If the cooling infrastructure is not ready, the GPUs do not get installed. That single dependency changes how the TAM should be sized, how the supply chain should be valued, and which companies hold positions that matter for earnings durability.

What We Found

The economics of liquid cooling at the rack level have shifted in ways that most models have not absorbed. We estimate that cooling content per GB200-class rack now exceeds power content per rack by a factor of roughly two to three, reversing the historical relationship where power infrastructure was the dominant cost line in datacenter builds. The subscriber section breaks down the building blocks of that estimate, from tray-level cold plate content through shared CDU and plumbing infrastructure, and reconciles our full-stack TAM framework against industry equipment forecasts from firms like Dell’Oro. The TAM story is larger than most investors expect, but only when you define the scope correctly.

Beyond the volume ramp, three structural shifts are reshaping the competitive landscape simultaneously. The CDU architecture is moving from sidecar to in-row configurations, changing the plumbing topology of the datacenter and which suppliers benefit. A new technology layer, micro-channel lids, is emerging at the chip-package level and creating a competitive surface that did not exist twelve months ago. And a consolidation wave exceeding $15 billion in disclosed and estimated deal value has swept through the sector as legacy HVAC and electrical infrastructure companies acquire liquid cooling capabilities they cannot build organically in the time the market requires. In the subscriber section, we detail each of these shifts, the companies positioned at the center of them, and the specific milestones that would change our view.

The Power-Cooling Convergence

The liquid cooling transition and the 800 VDC transition we analyzed in Part 1 are co-dependent. More efficient power delivery reduces the waste heat that cooling systems must handle. Denser liquid-cooled racks justify the capital investment in high-voltage DC distribution. The AI datacenter of the future is being co-designed around both systems as a single integrated infrastructure, and the companies that can deliver across both power and cooling hold a structural advantage over point-product suppliers. The subscriber section quantifies that convergence and identifies which companies span both layers.

What subscribers get in the full report:

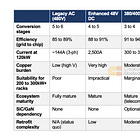

Cooling technology comparison. Air, rear-door heat exchangers, direct-to-chip, and immersion rated across six dimensions including viable rack density, PUE, and GPU roadmap alignment

GPU-roadmap adoption timeline. Three-phase framework from GB200 through Vera Rubin through Rubin Ultra, with density thresholds, CDU architecture shifts, and MCL adoption milestones

Facility-level TCO analysis. Capex per megawatt, operating cost savings, payback periods, and a full rack-level content bridge breaking out tray content, shared CDU/plumbing allocation, and power versus cooling spend per rack

Layered TAM waterfall. Rack-level versus full-stack sizing from 2024 through 2030, with explicit scope notes reconciling our estimates against Dell’Oro and other industry forecasts

Full-stack company scorecard. Nine companies across three tiers (component specialists, system suppliers, infrastructure integrators), with share estimates, margin profiles, key risks, and the specific conditions that would drive estimate revisions for each name

M&A tracker. Eight deals totaling $15 billion+ in disclosed and estimated value, with deal structures, strategic logic, and remaining private acquisition targets

Catalyst tracker. Eight specific milestones we are monitoring, from Vera Rubin volume ramp and MCL qualification timing to CDU lead-time normalization and first major brownfield conversions

Risk framework. Four scenarios that would change our view, from cold plate commoditization and CDU supply bottlenecks to immersion cooling disruption and operational reliability failures, each with specific trigger conditions

800 VDC convergence analysis. How the liquid cooling transition co-depends on the power architecture shift we covered in Part 1, and which companies hold positions that span both layers