From Model Wars to Platform Wars

Who Owns the Enterprise AI Control Plane?

This report is the natural follow-on to our recent note, From AI Usage to AI Earnings Power.

That note argued that the first investable AI cycle is forming inside dense workflows where work is repeated, measured, permissioned, and close to execution. Customer ROI is the bridge from usage to monetization, and the durability of AI software, model, and infrastructure spend depends on whether AI changes the economics attached to a repeated unit of work.

This report takes the next step. If customer dollars begin moving inside those workflows, the value-capture question becomes which layer of the enterprise stack captures them as deployments convert from pilot to production. Platform competition is the immediate consequence of the AI ROI discussion, because platform control follows budget formation.

The model race still matters for capability and lab-layer unit economics. But the customer relationship increasingly depends on the interfaces that own permissions, telemetry, audit, and the budget conversation once agents do real work.

From ROI Proof To Platform Control

Our prior report focused on which workflows justify enterprise AI spend. This report focuses on who captures the economics once those workflows move into production.

Three points connect the two reports. First, AI ROI evidence is forming inside a more specific set of workflows than the broad enterprise AI narrative implies, and those workflows share a baseline, a budget owner, and a measurable economic unit of work. Second, they are also where execution, permissioning, and audit are most concentrated, which makes them the workflows where platform position is most contested. Third, value capture follows the layer that proves and prices the completed work. Model supply and interface ownership also matter, but they do not automatically determine where the durable economics settle. Usage and revenue can grow at different layers at the same time, and the long-duration economics tend to accrue to the layer the customer associates with budget movement.

The Three Control Points

We map the value-capture question across three layers. The model, runtime, and interface layer is where foundation model vendors compete to become the default intelligence behind agentic workloads and, increasingly, the entry point where user intent enters the system. The orchestration and workflow layer turns model calls into completed business outcomes. The system-of-record and governance layer owns identity, entitlement, approval, and audit. Economics do not always accrue where compute is consumed. They accrue where enterprises standardize the system of action.

OpenAI is executing a horizontal strategy built on distribution and intent capture, and the test is whether horizontal usage becomes recurring workflow ownership. Anthropic is executing a narrower production-credibility strategy, with coding as the strongest current ROI wedge, and the test is whether that wedge travels into other measurable-ROI workflows. Neither answer is fully proven against the workflow evidence we have so far. See our deep dives on both companies.

Why Coding Is The Operational Laboratory

Coding is the first workflow where AI ROI, workflow ownership, and governance bottlenecks are visible at the same time. The bottleneck has moved from authoring to review, integration, security, and policy. We treat coding as a live laboratory, not as the full market: it shows how the value-capture question is likely to evolve in legal, finance, customer operations, regulated servicing, and analytics.

Three Cross-Cutting Themes

Pricing is moving from seats toward hybrid seat, action, and outcome models that price the economic unit of work. Enterprises are multi-model by design and consolidating by workflow, which is not the same thing as consolidating by vendor. The labor mix is moving toward a new role we describe as the AI orchestrator.

The market can support more than one winner across layers. The model, runtime, and interface layer likely supports two to three franchises with operating credibility. The orchestration and workflow layer likely supports a handful of category leaders. The governance layer should consolidate toward a smaller set of platforms that own identity, entitlement, and audit. Reading the question as a binary between OpenAI and Anthropic risks missing where customer ROI dollars are accumulating inside the workflows we tracked in the prior note. The next question is whether buyers are ready to fund those workflows at scale, which is where our CIO/CTO survey work picks up next week.

What Paid Subscribers Get In The Full Report

• Our three-layer map of the enterprise AI control plane, with the criteria we use to judge which layer captures lasting margin in each category.

• A direct mapping from the AI ROI evidence in the prior report to platform position, including which workflows are most likely to define the next wave of value capture.

• A variant-perception section: what consensus believes, what is underappreciated, and where this report differs.

• A side-by-side read on OpenAI and Anthropic, sharpened around distribution and intent capture versus production credibility and utility economics, with the conditions that would cause us to reweight each.

• The counterthesis on workflow and system-of-record incumbents, with a named list of vendors we view as structurally advantaged in the agentic cycle once ROI is proven.

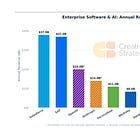

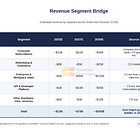

• Our view of frontier-lab revenue, enterprise penetration, workload share, and growth, expressed as ranges rather than point estimates and marked as triangulated rather than company-guided.

• An expanded read on Claude Code and coding agents, including what ROI, bottleneck migration, pricing dynamics, and workflow ownership tell us about where the next high-value workflow lands.

• Pricing architecture in practice, with the seat, action, outcome, and work-unit combinations we are seeing across CRM, ITSM, developer, and security suites.

• The buyer behavior view, including why multi-model by design does not prevent consolidation at the workflow layer.

• Token economics and unit cost curves, including how we think about deflation, call volume, and gross margin trajectory for model vendors and platforms.

• Labor and org design, including the AI orchestrator role, the change in junior hiring, and the productivity and quality tradeoffs we see in main-branch work.

• The security and governance read, including why AI-generated code failure rates matter for enterprise budget decisions and how that lesson generalizes to adjacent workflows.

• A diligence approach for evaluating any company in the stack, with the questions we ask and the red flags we weight most heavily.

• A twelve-month watch list across labs, platforms, incumbents, and private companies, with specific and measurable signals.

• What must be true for OpenAI, Anthropic, and the counterthesis to each hold, expressed as testable conditions rather than narratives.

• What would make us wrong, expressed as explicit falsification conditions with observable signals investors can track.

• The value-capture implications for public platforms, private labs, and the enterprise software stack, expressed as analytical weighting and indicators to track.